“Oh my god. Oh my god,” I yelled as I looked at my own face on someone else’s body. It was all there: my five o’clock shadow, my goofy grin, even the bags under my eyes.

I was on a Microsoft Teams call interacting with this deepfake version of myself in realtime. Ordinarily the other person on the line looks nothing like me, but with a gaming laptop and a sought-after, cutting-edge piece of software for scammers, his face morphed into mine. My deepfake pinched his cheek, covered his nose, and stroked his chin, all without the illusion breaking.

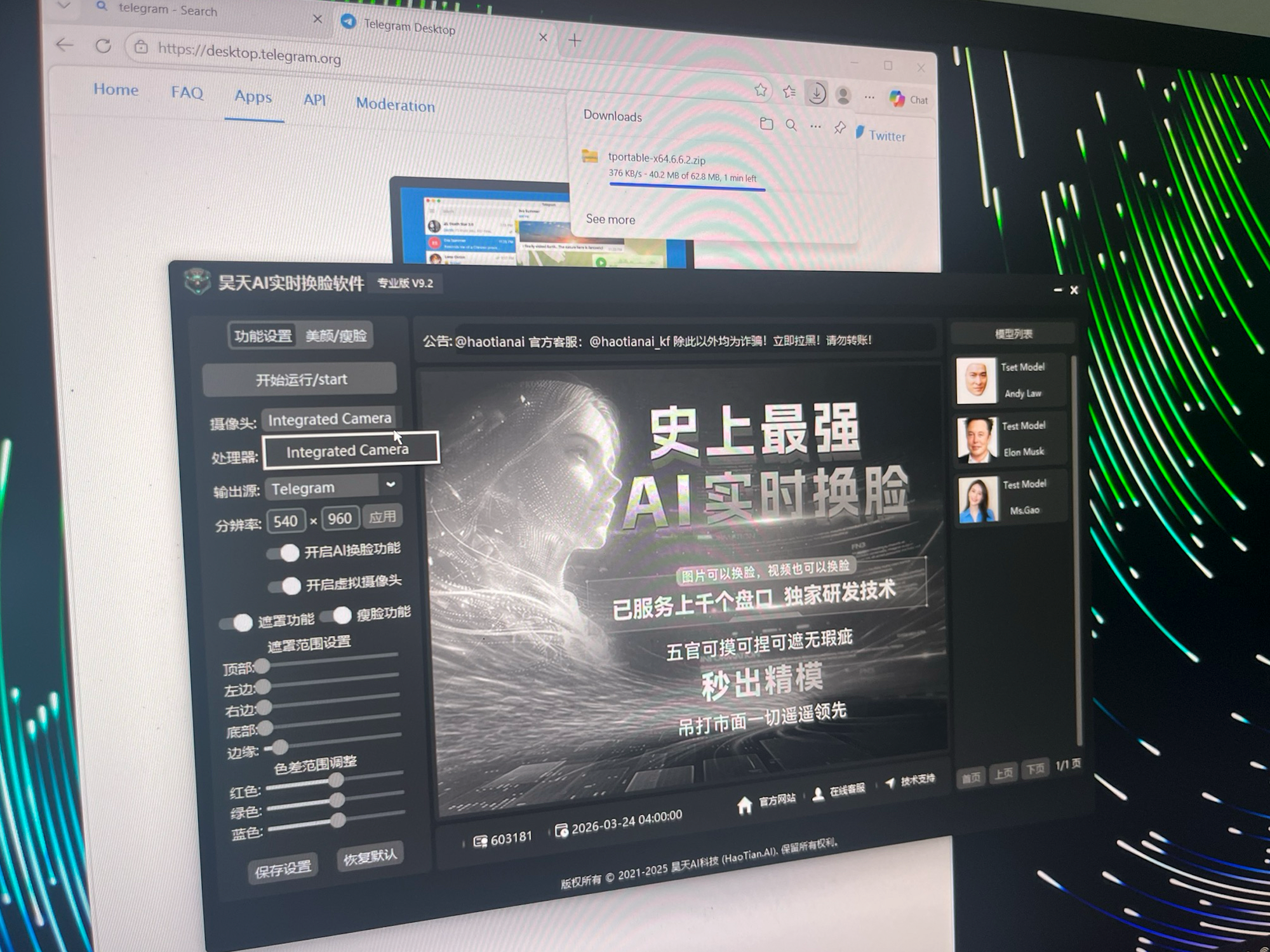

Whereas video deepfakes used to be about superimposing someone’s face onto an existing video, the tool I was using promised something else: the ability to shapeshift into someone, anyone, live during a video call. After weeks of back and forth with the Chinese-language scammers selling the tool, called Haotian AI, I had obtained a copy of the software. Haotian AI is built to work specifically with platforms we all use every day: WhatsApp, Microsoft Teams, Zoom, TikTok, Instagram, and YouTube.

Haotian AI marks the next stage in deepfake scams and fraud, one that the public and tech companies may not be ready for, where criminals are able to change their appearance in real time to trick people, including Americans, into handing over their money. Romance scams, tax fraud, virtual kidnappings: all stand to be amplified by live deepfake software which continues to improve in quality.

404 Media’s experimentation with Haotian AI marks the first time a journalist has managed to test this software to see how it really works, how effective it is, and what its existence means for the present and very near future of scams. Our investigation finds Haotian AI demonstrates its tool as a way to impersonate at least one U.S. police department. We link Haotian AI to Chinese money laundering networks and the ecosystem providing services to massive scam compounds in South East Asia, and find that Haotian AI has brought in more than $4 million for its creators. Our investigation also reveals Haotian AI is likely based on open source face swap tools, meaning the true value of the software is its sophisticated technical support. With that, even the least tech-savvy criminals can now access realtime deepfake software, opening up the possibility for more fraudsters around the world to use this powerful technology.

“It is far ahead of everything on the market,” the user interface of Haotian AI reads.

A clip of a Microsoft Teams call using Haotian AI.

Haotian AI’s realtime deepfakes can be particularly impressive, able to handle adjustments in lighting and objects appearing in front of the subject’s face, according to demos the company has posted on Telegram. One demo video shows an Asian woman magically transforming into actor Gal Gadot. In the demo, the deepfake Gadot blows a kiss, covers one of her eyes, and rapidly swipes her hand past her face, with the software not glitching once. Other tools sometimes fail when a user touches their own face. When they do so, the software malfunctions and shows the real person underneath. In the demos, Haotian AI keeps the illusion going, though. Another demo video shows a realistic, albeit dewy skinned, Elon Musk and Jackie Chan.

In a direct and live demonstration with me over the messaging app Telegram, a Haotian AI technician showed in real time how the software can also make a subject’s lips thicker or thinner, or their jawline sharper or more rounded. The user interface of Haotian AI also lets customers adjust the size of their deepfake’s nose, use an “acne removal” feature, and change the shape of their eyes. For an effective deepfake, some configuration may be required, according to 404 Media’s own tests.

And Haotian AI may not just beat the human eye, but tools designed to detect deepfakes as well. Xception is a deepfake detection model; in a paper published last June, researchers found it “struggled” to detect Haotian AI-generated deepfakes.

“While Xception hit 89.1% accuracy on the control stuff, it misclassified almost 100% of the Haotian samples as ‘authentic,’” Charles Fross, one of the authors of that paper, told 404 Media in an email. “Haotian’s work is shockingly convincing, especially with how it handles facial and body movements.”
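A minimal Python sketch shows how a detector can score well on one set and still miss almost every sample from another. The counts below are hypothetical, chosen only to mirror the shape of what Fross described (roughly 89.1% accuracy on a control set, nearly every Haotian sample labeled authentic). They are not the paper's data, and the function names are illustrative.

```python
def accuracy(tp: int, tn: int, fp: int, fn: int) -> float:
    """Share of all samples (real and fake) that the detector labeled correctly."""
    total = tp + tn + fp + fn
    if total == 0:
        raise ValueError("no samples")
    return (tp + tn) / total


def miss_rate(tp: int, fn: int) -> float:
    """Share of fake samples the detector wrongly labeled as authentic."""
    fakes = tp + fn
    if fakes == 0:
        raise ValueError("no fake samples")
    return fn / fakes


# Hypothetical control set: 500 fakes and 500 real videos.
# tp = fakes caught, fn = fakes missed, tn = real passed, fp = real flagged.
control = {"tp": 450, "tn": 441, "fp": 59, "fn": 50}

# Hypothetical Haotian set: 1,000 fakes, almost all labeled authentic.
haotian = {"tp": 2, "fn": 998}

print(f"control accuracy:  {accuracy(**control):.1%}")
print(f"control miss rate: {miss_rate(control['tp'], control['fn']):.1%}")
print(f"haotian miss rate: {miss_rate(haotian['tp'], haotian['fn']):.1%}")
```

The point of separating the two numbers is that accuracy on a familiar test set says little about how a detector handles fakes from a generator it has not seen before.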

There are now a variety of ways to produce deepfakes, but generally they work by training a machine learning model on images of a person’s face, then mapping that face onto another face in a video, frame by frame, a process that could take seconds, minutes, or more, depending on the method and how long the video is. Realtime deepfakes work similarly, but are more sophisticated because they have to track a face and map the deepfake face onto it moment to moment.
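The difference comes down to a time budget. As a rough sketch in Python, assume a call running at 30 frames per second (a common video rate, not a figure from Haotian AI) and some made-up per-frame processing times. An offline tool can take as long as it likes per frame, while a live one has to finish each frame before the next one arrives.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to process one frame, in milliseconds."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


def keeps_up(per_frame_ms: float, fps: float) -> bool:
    """True if processing one frame fits inside the per-frame budget."""
    return per_frame_ms <= frame_budget_ms(fps)


ASSUMED_FPS = 30.0  # assumption: a typical video call frame rate

# Hypothetical processing times per frame, for illustration only.
for label, cost_ms in [("fast pipeline", 25.0), ("slow pipeline", 50.0)]:
    verdict = "keeps up" if keeps_up(cost_ms, ASSUMED_FPS) else "falls behind"
    print(f"{label}: {cost_ms:.0f} ms per frame, {verdict}")
```

This is why hardware matters so much for live deepfakes: a pipeline that overruns the budget drops frames or lags behind the person's real movements.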

One of Haotian AI’s demonstration videos.

Haotian AI is squarely part of the sprawling Chinese-language crime ecosystem that provides services to multibillion-dollar scam compounds in South East Asia. In practical terms, these networks exist on Telegram. Once you join a central channel which lists vetted services on offer, you can access what feels like a limitless array of illegal products: Money laundering. Malware. “High-end” escorts. These Chinese-language Telegram networks are easily accessible, dangerous, and massive. Researchers have said one market, called Xinbi Guarantee, has facilitated $21 billion in transactions. Authorities have attempted to sever another, called Huione Group, from the U.S. financial system.

When WIRED reported on Haotian AI in December and approached Telegram for comment, the main Haotian AI Telegram channel became inaccessible.

But the company has continued to operate, push significant updates, and sell its technology, 404 Media found.

‘COME IN’

I found Haotian AI’s Telegram account and, through Google Translate, said I was interested in buying the technology. For weeks, these conversations didn’t go very far. Someone representing the company would ask if I had a powerful PC to run the software, I would say yes, and the person would not reply. Something shifted in March, and the company became a lot more responsive. I made sure to log on to Telegram when Haotian AI’s representatives were online.

Haotian AI is based out of Cambodia, according to cybercrime fighting NGO Chong Lua Dao. Hieu Minh Ngo, a former hacker prosecuted by the U.S. for identity theft who now works to combat fraud with the organization, shared screenshots with 404 Media showing Haotian AI offering physical installation of the software “in some areas of Cambodia.” Ngo also shared a video which appears to show Haotian AI’s customer support staff installing the software in an office building in Phnom Penh.

In my chats with Haotian AI, the representative sent over a table listing the PC specifications the software requires. The specs resembled a mid-range to high-end gaming PC: an i7 processor; 16GB of DDR5 RAM; and, most importantly, an Nvidia 4080 SUPER graphics card. As with other forms of generative AI, having a card like this, with a powerful parallel processing architecture, is the key to unlocking an effective realtime video deepfake.

The company then asked for an array of screenshots that showed we had access to a PC that matched or beat these specifications. I asked 404 Media’s Emanuel Maiberg to take each of the requested screenshots on his beefy gaming PC. After several days of relaying this information over, which may have been a tactic to ensure we weren’t timewasters, Haotian AI agreed to take us to the next step.

“Come in,” the company contact said after they made a dedicated Telegram group chat to connect us with other parts of Haotian AI. That group included myself, the person I had been speaking to, and two other Haotian AI Telegram contacts, including one referred to as a “technician.” They would run me through how to install the software.

Image: a video of a Haotian AI advert. Provided by Chong Lua Dao.

That person uploaded four files: the Haotian AI client itself split into three password locked files, and a piece of remote access software called AnyDesk. As it turned out, I wouldn’t be doing any installing myself; as part of its service, Haotian AI wanted to remote into Emanuel’s PC to perform the installation itself. “You can sit in front of your computer and watch the whole thing while I’m remotely connected,” one of the Haotian AI representatives said, according to Telegram’s automatic translation of the chat.

As journalists who handle sensitive information all the time, we did not feel comfortable letting a criminal-adjacent group have free access to one of our computers, where who knows what they might do. Tom Cross, head of threat research at cybersecurity firm GetReal Security, agreed to let us test on one of his computers instead. Cross downloaded the remote access software and let the scammers in.

Cross said he watched as the technician created a new partition on the hard drive, turned off multiple Windows security features including the firewall, downloaded and installed WinRAR, and configured a copy of Windows Defender that was included in the provided files. The technician then uncompressed the Haotian AI files on that new partition, installed and logged into the Haotian AI software, and downloaded an update from Nvidia for some specific drivers. Finally, the technician installed Telegram. All of that is fairly technical stuff that an ordinary user or scammer may not know how to do properly or quickly. The whole process was over in a few minutes.

To signify I was a new customer, the company changed the group chat’s profile picture to Haotian AI’s distinctive wolf logo, with the English text “New!” plastered underneath.

The company then wanted to call me on Telegram to do a live demonstration of the tool. Understanding they were likely speaking to a non-native speaker, a Haotian AI representative asked, “Do you understand Chinese?” I explained I could only text and wouldn’t talk. That wasn’t an issue.

A demonstration Haotian AI gave to 404 Media.

The technician then called me on Telegram and texted what he was doing while demonstrating the software. I don’t think I ever saw the technician’s real face. Instead when he started the call he was using the software to look like Andy Lau, the prolific Hong Kong actor. At one point, he changed himself into a woman too. The technician, likely based somewhere on the other side of the world, smothered his mouth with his hand and covered his eye. This live demonstration was on par with the previously recorded Gadot one.

Haotian AI gave me free access to the tool for a day, but only with the ability to turn into a few preselected faces. For a custom model that would let us transform into anyone we wanted, we needed to provide a series of photos of the target and, of course, pay. Haotian AI quoted us $1,998 a year for the software, and $498 per custom model. The company wanted to be paid specifically in TRON (TRC20), a version of the cryptocurrency Tether which runs on the TRON blockchain. Unlike volatile Bitcoin, Tether is tied to the U.S. dollar. We sent the requested amount of cryptocurrency.

The fact that Haotian AI still sold the technology to a non-Chinese speaker shows the tool may not be limited to that regional cybercrime ecosystem. Haotian AI’s deepfakes are especially impressive when compared to other tools that scammers in, say, Nigeria are using. The only thing slowing Haotian AI from spreading may be the language barrier (or the cost in some cases).

I shared the payment address with Chainalysis, a cryptocurrency tracing company, to ask what insight they had on it. “The wallet provided has processed over $253K between January 6, 2026—present. Overall, Chainalysis has identified over $4M in total in-flows to Haotian wallets dating back to October 2023,” Andrew Fierman, head of national security intelligence at Chainalysis, said in an emailed statement. “These wallets have interacted extensively on-chain with HuionePay and with Chinese-language money laundering services and scam technology vendors—such as digital alteration and translation providers—intended to identify, target, and manipulate victims.”

BUILDING A FACE

To build a custom model, Haotian AI asked me to provide three to eight photos of the target face. They wanted photos with the subject looking straight at the camera; no obstructions to the facial features like hair covering the face; no showing of teeth or strange expressions; and no heavy Photoshop editing.

At my desk, I took nine selfies. For some of these, I deliberately wore my glasses just to test whether Haotian AI would ask me to take the photos again. After this, the Haotian AI customer support representative asked me to join another dedicated group chat on Telegram. This chat, the representative explained, was “a dedicated technical support group set up just for you.”

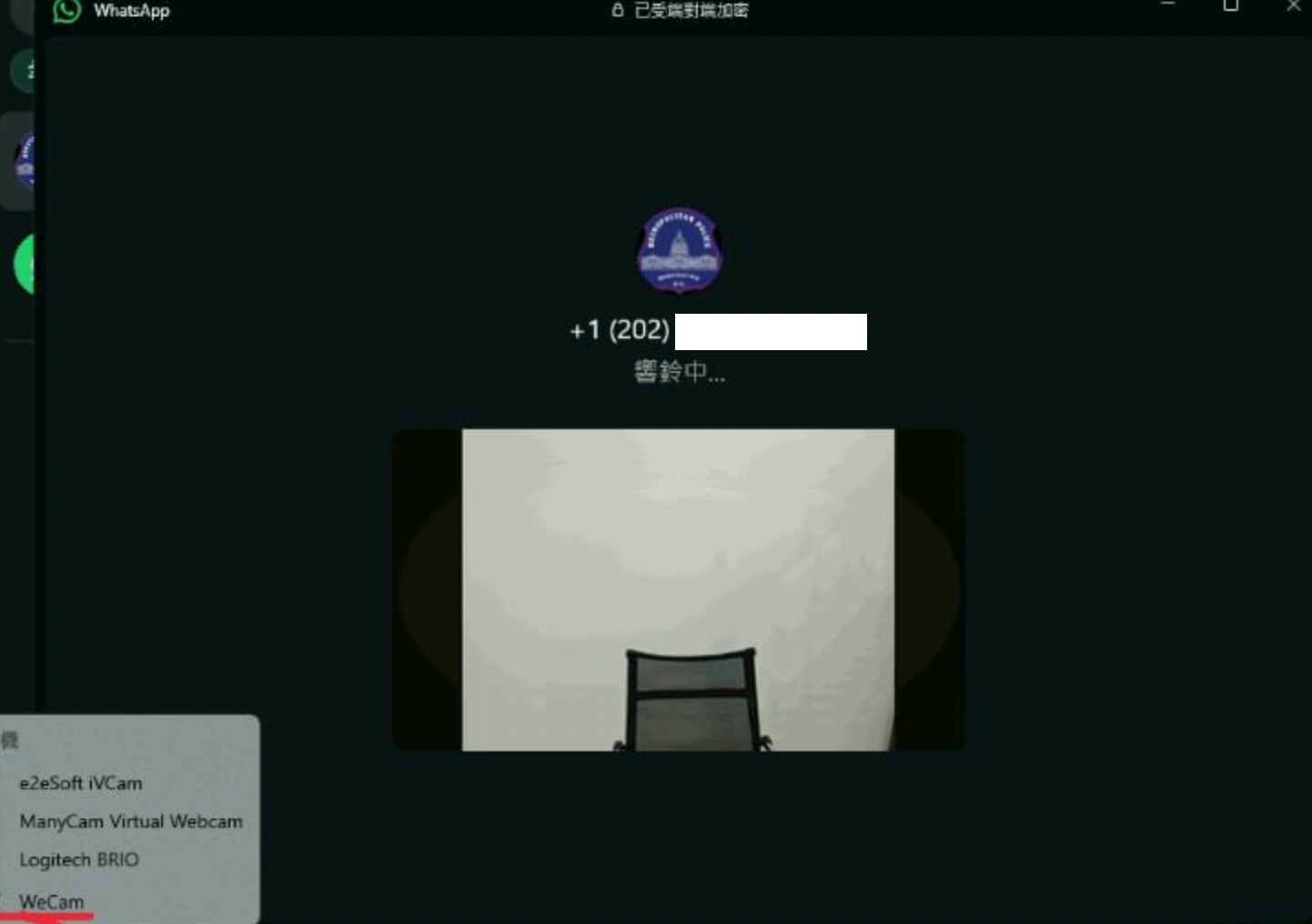

Someone in that group asked me what chat platforms I wanted to use Haotian AI with. I said WhatsApp and Zoom, and Haotian AI sent over instructions for feeding the software’s output into live calls on those platforms. Essentially, Haotian AI acts as a virtual camera a user can select when using Microsoft Teams or one of the other platforms.

While providing instructions for how to configure Haotian AI with WhatsApp, a customer support worker sent a couple of related screenshots. One of them showed a WhatsApp video call including the distinctive logo of the Metropolitan Police Department (MPD) in Washington D.C. The MPD told 404 Media it has not received reports of scammers using deepfakes, but in November the MPD warned the public that fraudsters were impersonating MPD officials in video calls.

Scam compounds in South East Asia sometimes build elaborate, Hollywood-style sets to trick victims into thinking they are talking to the authorities, including rooms, flags, and scammers wearing uniforms. They are often run by Chinese organized crime figures.

Images showing the UI of Haotian AI, the photos 404 Media sent to the company, and a WhatsApp demo including the logo of the MPD. Images: 404 Media.

404 Media asked multiple companies that Haotian AI targets whether they have mitigations to stop these sorts of realtime deepfakes. A Zoom spokesperson said, “We recently announced that Zoom is further enhancing meeting security with integrated deepfake risk detection offering, providing real‑time alerts when synthetic audio or video is detected. This tool will launch this summer.”

Meta did not answer the question directly, and pointed to its other work targeting infrastructure used by scam centers, including taking down tens of millions of Facebook, Instagram, and WhatsApp accounts.

Microsoft, TikTok, and Google acknowledged requests for comment but ultimately did not provide statements.

On the same day I shared my selfies with Haotian AI, someone in the group chat said my custom model was ready. The Haotian AI software updated automatically, and now we could finally test the software with my own face.

First we tested the software with GetReal’s Cross. The results were poor, at best. I could make out my haggard eyes and a bit of my facial hair, but the face was digitally stretched across Cross’s face, and he has a different build from me. Cross was also in a hotel room with quite dark lighting.

For the deepfake to be convincing, a scammer needs to put more work in, with ideal lighting conditions and a model whose face structure somewhat resembles that of the target. “Since the AI merely swaps facial features, if the model’s face shape differs too significantly from that of the character design, the resulting output will be suboptimal,” Haotian AI’s customer support told me in a chat.

When we instead tested the tool with Ian McGrew, a product manager at GetReal Security, the results were much, much better. His face size is closer to mine. That said, McGrew’s facial features are really nothing like my own. But, with McGrew sitting in a Starbucks on the gaming laptop over public WiFi, Haotian AI turned his face into mine.

A comparison showing McGrew on the left, and the deepfake on the right. Images: 404 Media.

I opened the Microsoft Teams call to test the software. Immediately upon joining, I was greeted by my own face. The deepfake of me cheekily had one eyebrow raised, then smiled and waved after I started shouting “oh my god, oh my god.”

Like in Haotian AI’s own demo videos, I asked McGrew to touch his face, pull on his cheeks, and cover his eyes. Although the deepfake wasn’t as pristine as the, say, Gadot demonstration video, it still produced a convincing result.

There were some limitations. Haotian AI can handle a subject swiping their hand in front of their face, but only if their fingers are all together, making a single solid object. With the fingers spread out, the deepfake can warp and distort. This appears to be a broader problem with realtime deepfakes at the moment, so much so that people on the lookout for scammers are asking them to perform a so-called three finger test. Sometimes when McGrew put his fingers towards his eyes, it made the eyes bulge.

Cross also examined the Haotian AI files themselves. “The deepfake software includes some popular AI libraries for face selection, face swapping, and post-swap enhancement, including ‘inswapper’ a face swapping ML model that is available on HuggingFace and is included in many popular open source face swapping tools, such as FaceFusion,” he told me.

Inswapper is maintained by a company called InsightFace. InsightFace offers both an open source version of inswapper and a paid product for enterprises. “The open-source models we release on GitHub (including inswapper) are strictly intended for non-commercial research and academic purposes. Any use of these models in a criminal context, such as the ‘Haotian AI’ software you mentioned, is a direct violation of our intended use cases and the spirit of our licensing terms,” a spokesperson for InsightFace told 404 Media in an email. “For any legitimate commercial application of our technology, we provide a formal licensing process. This allows us to ensure that the technology is being used by vetted organizations for ethical and legal purposes. This process is fundamentally different from the anonymous, unauthorized misuse of open-source research code by third parties.”

The company said that the “dual-use” of AI is a challenge for the research community. “We have no control over how anonymous actors integrate open-source research code once it is published for the scientific community, but we do not condone, support, or have any affiliation with criminal entities,” InsightFace’s spokesperson said.

A clip of a Microsoft Teams call using Haotian AI.

If Haotian AI is at least in part relying on open source face swapping tools, that means the company’s value really comes from its technical support. A non-technical scammer—a criminal who may know how to trick people, but doesn’t grasp the minutiae of deepfakes—is now able to digitally alter their appearance in real time.

Chong Lua Dao, the NGO, told 404 Media that scam compounds use many different deepfake tools. Those can be free, open source programs, tools developed internally, or software bought from contractors, the group said.

In 404 Media’s own survey of the Chinese-language ecosystem, some other tools include Panda AI, which says it can be used with WhatsApp mobile; Xiaomi Technology AI, which has pricing close to Haotian AI’s; and Ark Technology AI, which offers similar capabilities.

For years, scam compounds in South East Asia have operated almost openly, with local authorities doing little about the massive influx of criminals and human trafficking victims forced to work inside them. More recently some agencies have closed compounds, letting journalists physically walk their grounds.

In a court record filed last month, an FBI Special Agent detailed how the agency has interviewed victims from scam compounds and parsed a mountain of evidence seized from the sites. As part of that case, U.S. authorities charged two Chinese nationals, seized $700 million in cryptocurrency, and shut down a Telegram channel used to lure people to work at a compound in Cambodia.

Which brings up the question of whether people providing technological services to these compounds might also be targeted by authorities. “Prosecuting the application providers is tricky. The technology itself isn’t the crime; the relationship is. There has to be evidence that the seller knew, or willfully ignored, that their customer was a scam operation,” Erin West, a former prosecutor and now founder of Operation Shamrock, an organization focused on educating people about, and disrupting, organized scam groups, told 404 Media in an emailed statement.

“Real-time deepfake software isn’t ending up in scam compounds by accident. If prosecutors can prove knowledge or willful blindness, these companies belong in court right alongside the operators they enable. That’s how you go after the supply chain instead of chasing victims one stolen retirement at a time,” she added.

The FBI recently shut down a different provider that was allegedly using “neural networks” to generate realistic photos of fake ID documents. In February 2024, 404 Media revealed the existence of OnlyFake, where users could pay a small amount of cryptocurrency to make photos of fake driver licenses and passports. Two years later, the U.S. Department of Justice announced it had charged the site’s creator, a Ukrainian national called Yurii Nazarenko, who then pleaded guilty to fraud and conspiracy-related charges.

In response to a detailed list of questions from 404 Media, a Haotian AI representative simply replied “OK” in Chinese.

Haotian AI is expanding into other areas even more unambiguously focused on fraud. One of those is a tool to help bypass know your customer, or KYC, checks. Often people making an account online will need to take a selfie and upload a copy of their ID. Haotian AI’s new tool promises to let customers circumvent those checks by controlling a persona looking into a virtual camera.

“Hello! Due to business expansion, our company is launching new products, including customized facial recognition technology and KYC verification. New and existing customers are welcome to inquire. Free testing is available! Thank you for your support and trust,” Haotian AI wrote in a Telegram announcement in April. In the accompanying demonstration video, a user appears to bypass a KYC selfie check by controlling a video of a woman.

Haotian AI ended the announcement with this: “We wish you continued success and prosperity!”