A Cybertruck owner in Texas is suing Tesla for $1,000,000 in damages for “grossly negligent conduct” following an accident on a Houston highway that involved the vehicle’s self-driving feature. According to the lawsuit, Tesla is to blame for the crash because CEO Elon Musk has oversold the truck’s ability to drive itself.

As originally reported by the Austin American-Statesman, Justine Saint Amour bought a Cybertruck from a used car dealership in Florida and drove it until it crashed on a Houston overpass on August 18, 2025. That summer day, Saint Amour was driving down Houston’s 69 Eastex Freeway with the vehicle’s full self-driving (FSD) mode engaged.

“Something terrifying happened, without warning, the vehicle attempted to drive straight off an overpass,” Bob Hilliard, Saint Amour’s attorney, told 404 Media in an emailed statement. “She tried to take control, but crashed into the barrier and was seriously injured—mostly her shoulder, neck, and back.”

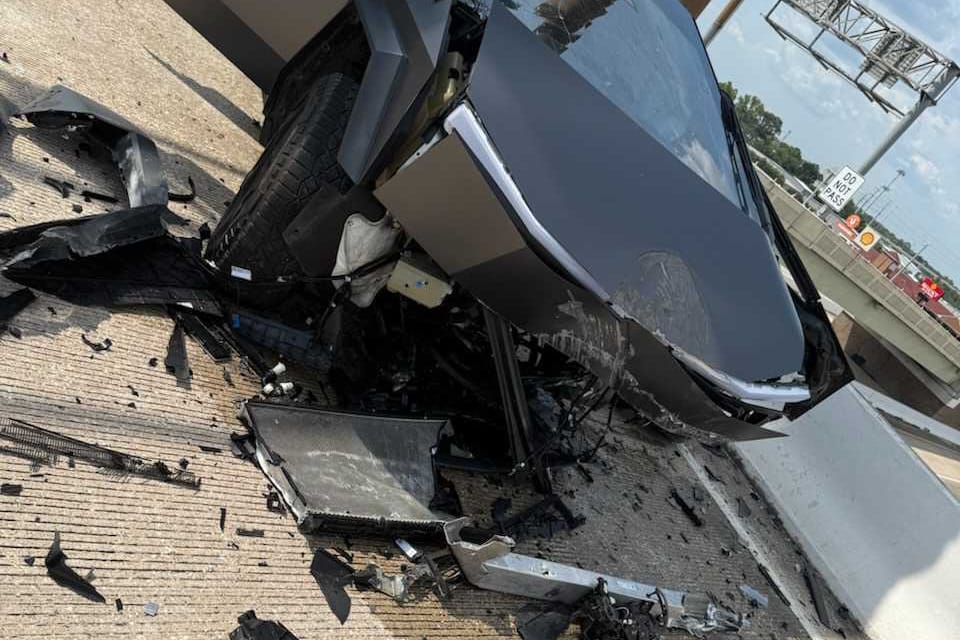

Hilliard shared a photo of the aftermath and dashcam footage of the crash with 404 Media. In the video, the Cybertruck travels down the highway and continues straight across an intersection instead of turning right to follow the road. It stops only when it slams into a signpost on the overpass.

The lawsuit blames the crash on Musk. “Elon Musk is an aggressive and irresponsible salesman, who has a long history of making dangerous design choices, and over-promising the features of his products,” the lawsuit said. “This promotion of products, for capabilities that they do not have, is the reason for this incident.”

Musk has spent the past few years promoting Tesla vehicles’ ability to drive themselves, a feature that costs $99 a month and is sold as “Full Self-Driving.” But the lawyers said the FSD feature doesn’t work as advertised and that it’s irresponsible of Tesla and Musk to market their vehicles as having it. “Despite this dangerous condition of Tesla’s ‘self-driving’ vehicles, Elon Musk and Tesla have made representations in the year 2019 that Tesla’s full ‘self-driving’ vehicles were fully operational and safe.”

Tesla and Musk have gotten in trouble for this before. In February, the company agreed it would stop using the terms “autopilot” and “full self-driving” when advertising its vehicles in California. There have been multiple fatal and non-fatal crashes involving Tesla vehicles running on Autopilot, including one in 2024 in which a driver hit a parked police car. In August, a jury ordered Tesla to pay $200 million in punitive damages and another $43 million in compensatory damages to the family of a 22-year-old who died in a crash involving the car’s Autopilot system.

According to the lawsuit, one reason this keeps happening is that Musk intervened directly to make Teslas cheaper by using cameras instead of LiDAR, which uses laser light to build a 3D map of the surrounding area. “Elon Musk’s intervention into the design of Tesla vehicles has long been reckless and dangerous. While engineers at Tesla recommended the super-human vision of LiDAR be included for self-driving vehicles, and competitors like Waymo and Cruise relied heavily on LiDAR, Musk chose instead to rely only upon cheap video cameras,” the lawsuit said. “Musk referred to the LiDAR used by his safer competitors as expensive and unnecessary.”

Fully automated driving is a hard technical problem. LiDAR is better than basic cameras, but it isn’t perfect, and LiDAR-based self-driving cars crash too. Robotaxis have other problems as well. In cities where Google’s Waymo operates, passengers are leaving the doors open and Waymo is contracting DoorDashers to close them for $10 a pop; a Waymo in LA attempted to drive through a police standoff; and a woman in San Francisco was trapped in a Waymo after men blocked the car and started to harass her.