In graduate school, my experimental archaeology professor told a student to create a door socket – the hole in a door frame that a bolt slides into – in a slab of sandstone by pecking at it with a rounded stone. After a couple of weeks, the student presented his results to the class. “I pecked the sandstone about 10,000 times,” he said, “and then it broke.”

This kind of experience is known as individual learning. It works through trial and error, with lots of each. Also known as reinforcement learning, it is how children, chimpanzees, crows and AI often learn to do something on their own, such as making a simple tool or solving a puzzle.

But individual learning has limits. No matter how much someone experiments through trial and error, improvement eventually hits a ceiling. Humans have been throwing javelins for a few hundred thousand years, yet performance has largely plateaued. At the 2024 Olympics in Paris, the gold medal javelin throw was about 5% shy of Jan Železný’s 1996 record. The level of expert play in the strategy game Go was essentially flat from 1950 to 2016, when artificial intelligence changed the equation.

Throughout humanity’s existence, these limits on individual learning have not applied to technology. Since IBM’s Deep Blue defeated world chess champion Garry Kasparov in 1997, supercomputers have become a million times faster – and now routinely outperform humans in chess and many other domains.

Why is technological improvement so different? My work as an anthropologist on cultural evolution and innovation shows that, unlike individual performance, technology advances through combination and collaboration. As more people and ideas connect, the number of possible combinations grows superlinearly, that is, faster than the number of people and ideas being connected. As a result, technological innovation scales with the number of collaborators.
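To see why the count of combinations outpaces the count of collaborators, consider a rough sketch in Python. It rests on simplifying assumptions made here purely for illustration, not drawn from the research itself: every pair of specialists can combine their knowledge, and so can every larger group. The function names `pairings` and `groupings` are hypothetical.

```python
from math import comb


def pairings(n: int) -> int:
    """Distinct two-way combinations among n specialists (assumes any pair can connect)."""
    return comb(n, 2)


def groupings(n: int) -> int:
    """Distinct groups of two or more among n specialists (assumes any subset can connect)."""
    # All subsets (2**n) minus the empty set (1) and the n single-person "groups".
    return 2**n - n - 1


# Illustrative community sizes; the numbers are not taken from any dataset.
for n in (2, 4, 8, 16):
    print(n, pairings(n), groupings(n))
```

Under these assumptions, doubling a community from 8 to 16 specialists raises the number of possible pairings from 28 to 120, and the number of possible groups from 247 to 65,519.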

My new book with anthropologist Michael J. O’Brien, “Collaborators Through Time,” reveals these patterns across human existence. It traces how 2 million years of technological traditions progressed through collaboration among specialists, across generations and with other species.

Expertise has been the key. Because traditional communities know who their experts are, specialization and collaboration have consistently underpinned human success as a species.

I’d summarize our insight into how technology keeps advancing as TECH: tradition, expertise, collaboration and humanity.


Traditions and expertise – the critical foundation

The longest technological tradition documented by paleoanthropologists was the Acheulean hand axe. The multipurpose stone tool was made by our hominin ancestors for almost a million years, including some 700,000 years at a single site in eastern Africa. People produced Acheulean tools through techniques they learned, practiced and refined across generations.

Later, small prehistoric societies of modern humans thrived on millennia of specialized knowledge: making music, thatching roofs, cultivating seeds, burying the dead in bogs, and making millet noodles and even cheese suitable for interring with mummies.

As early as 22,000 years ago, communities near the Sea of Galilee stored and used more than a hundred plant species, including medicinal plants. Shamans – ritual experts in medicinal knowledge and caregiving – helped their groups survive. Archaeological evidence from burial sites suggests these specialists were widely revered across thousands of years: In a cave in Israel, one shaman woman was interred with tortoise shells, the wing of a golden eagle and a severed human foot.

Collaboration – knowledge spanning time and place

Traditional expertise alone does not advance technology. Technological progress occurs when different forms of expertise are combined.

The wheel may have emerged from copper-mining communities. One expert sourced copper from the Balkans, another transported it, another smelted it. By about 4000 B.C., additional specialists cast copper into an early wheel-shaped amulet using the lost-wax method: shaping a wax model, encasing it in clay, firing it in a kiln, pouring molten metal into the mold, then breaking the mold away.

Transport technologies reshaped ancient product networks. As communities across Eurasia and Africa built wheeled vehicles and ships, and raised domesticated horses and other pack animals, collaboration expanded across continents. Maritime and overland trade linked blacksmiths, scribes, religious scholars, bead makers, silk weavers and tattoo artists.

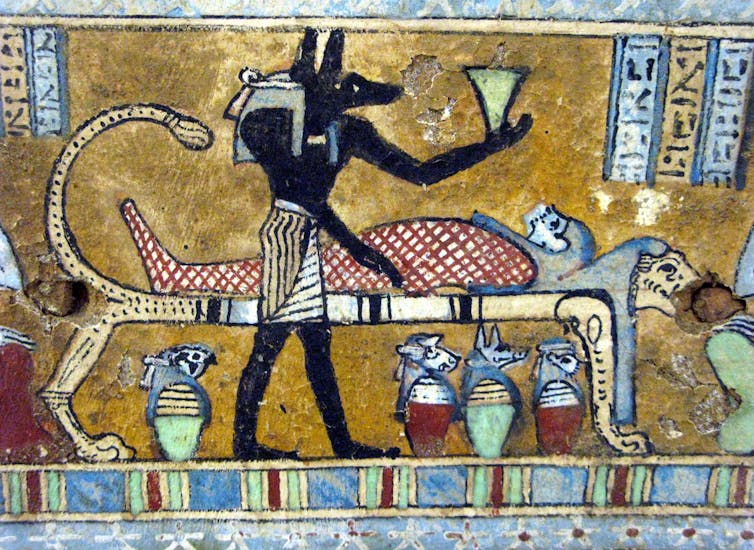

Expertise was often distributed between cities and their hinterlands, with cities functioning as hubs in cross-continental product networks. In ancient Egypt, no single community could produce a mummy. Mummification experts at Saqqara drew on a continental network that supplied oils, tars and resins, combining these materials with specialized techniques of antisepsis, embalming, wrapping and coffin sealing.

André/Wikimedia Commons, CC BY-SA

Around the world, states and empires – from the Indus Valley Civilization to the Vikings, Mongols, Mississippians and Incas – expanded these networks, serving as hubs that coordinated the exchange of raw materials, specialized knowledge and finished products. These exchanges could be highly specific: Chinese porcelain was shipped exclusively to 12th-century palaces in Islamic Spain via Middle Eastern traders who added Arabic inscriptions in gold leaf.

The scale has changed, but the structure has not. Today, within a global product space, an iPhone is assembled from a distributed network of specialized expertise and facilities.

Humanity – social learning

Today, AI may disrupt the millennia-long pattern of technological advancement through TECH. Most large language models generate statistically common responses, which can flatten culture and dilute expertise and originality. The risk grows as untapped high-quality training data – our reservoir of expertise – becomes scarcer.

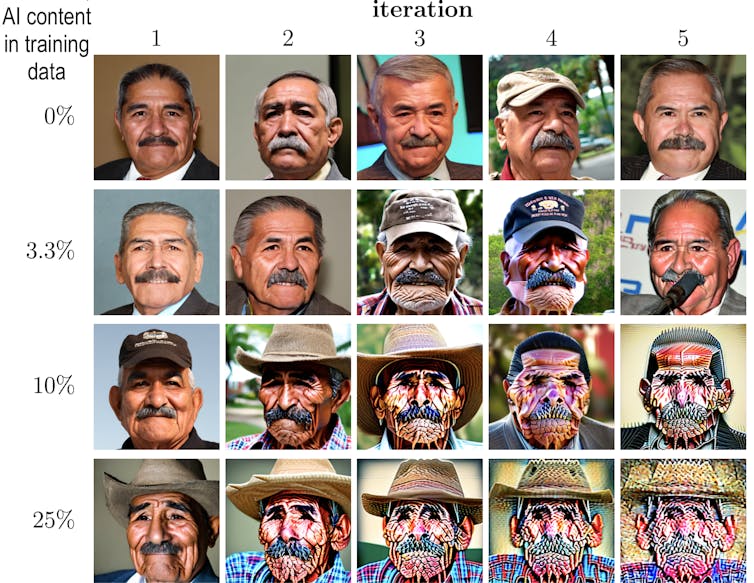

This creates a feedback loop: Models trained heavily on low-quality content may degrade over time, with measurable declines in reasoning and comprehension. Some scientists now warn that humans and large language models could become locked in a mutually reinforcing cycle of recycled, generic content, with brain rot for everyone involved. The dystopian extreme is AI model collapse, in which systems trained heavily on their own output begin to produce nonsense.

M. Bohacek and H. Farid, CC BY-SA

Brain rot is one reason some AI pioneers now question whether large language models will achieve human-level intelligence. But that, I think, is the wrong focus. The key to continually improving AI models is the same one that has sustained human expertise for millennia: keeping human experts in the loop – the E in TECH. Thanks to a kind of “pied piper” effect, an informed minority can guide an uninformed majority who copy their neighbors.

In a classic experiment, guppies, following their neighbors, ended up schooling behind a robotic fish that guided them toward food. A recent study showed that traffic congestion eases when autonomous vehicles make up as little as 5% of cars on the road. In both cases, a small, informed minority reshaped the behavior of the whole system.
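The logic of an informed minority can be sketched as a toy simulation. This is not a model of the guppy or traffic studies. It is a minimal illustration under assumptions chosen for simplicity: agents sit in a ring, each uninformed agent repeatedly adopts the average of its two neighbors' values, and informed agents never change theirs. The function `simulate_copying` and its parameters are hypothetical.

```python
def simulate_copying(n_agents: int = 20, n_informed: int = 1, steps: int = 2000) -> list:
    """Return each agent's final value after repeated neighbor-copying on a ring.

    Assumptions (illustrative only): informed agents hold the value 1.0,
    standing in for "knows where the food is"; all others start at 0.0.
    """
    informed = set(range(n_informed))  # the first n_informed agents are the informed ones
    state = [1.0 if i in informed else 0.0 for i in range(n_agents)]

    for _ in range(steps):
        updated = []
        for i in range(n_agents):
            if i in informed:
                # Informed agents ignore their neighbors and keep their value.
                updated.append(1.0)
            else:
                # Uninformed agents copy the average of their two ring neighbors.
                left = state[(i - 1) % n_agents]
                right = state[(i + 1) % n_agents]
                updated.append((left + right) / 2)
        state = updated
    return state


with_guide = simulate_copying(n_agents=20, n_informed=1)
without_guide = simulate_copying(n_agents=20, n_informed=0)
print(min(with_guide), max(without_guide))
```

With a single informed agent among 20, every agent ends up within a hair of the informed agent's value. With no informed agent, nothing moves the group at all.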

Like humans, large language models are social learners, and the learning can go in either direction. Designers can increase the likelihood that models continue to improve by ensuring they incorporate the accumulated lessons of human expertise across history. In turn, this creates the conditions for people and models to learn from one another.

In the 2010s, DeepMind’s AlphaGo Zero, trained purely by playing against itself, rediscovered centuries of accumulated human Go knowledge through individual learning, then went beyond it by crafting strategies no human had ever played. Human Go masters subsequently adopted these AI-generated strategies into their own play.

Well-trained large language models can likewise summarize vast bodies of scientific information, help talk people out of conspiracy thinking and even support collaboration itself by helping diverse groups find consensus. In these cases, the learning flows both ways.

From Acheulean hand axes to supercomputers, human innovation has always depended on tradition, expertise, collaboration and humanity. If AI is tuned to find and trust expertise rather than dilute it, it can become humanity’s next great technology – on par with ancient writing, markets and early governments – in our long story as collaborators through time.


R. Alexander Bentley does not work for, consult, own shares in or receive funding from any company or organization that would benefit from this article, and has disclosed no relevant affiliations beyond their academic appointment.